Training and results

ML-Agents training

Hyperparameter tuning is one of the most important parts of training: effective RL training requires the hyperparameters to be set up properly. In ML-Agents, all hyperparameters are specified in the trainer_config.yaml file, and their definitions can be found in the ML-Agents documentation. Below is the trainer_config.yaml configuration used to train our creature:

    default:
        trainer: ppo
        batch_size: 2024
        beta: 5.0e-3
        buffer_size: 20240
        epsilon: 0.2
        gamma: 0.995
        hidden_units: 128
        lambd: 0.95
        learning_rate: 3.0e-4
        max_steps: 700000
        memory_size: 256
        normalize: true
        num_epoch: 3
        num_layers: 2
        time_horizon: 1000
        sequence_length: 64
        summary_freq: 3000
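To get a feel for what some of these numbers imply, here is a minimal Python sketch that derives a few quantities from the config. It assumes the usual PPO reading of these keys (the buffer is split into minibatches of batch_size and iterated num_epoch times) and the rule-of-thumb reward horizon 1 / (1 - gamma). The helper names are made up for illustration and are not part of ML-Agents.

```python
# Values copied from the trainer_config.yaml shown above.
config = {
    "batch_size": 2024,
    "buffer_size": 20240,
    "gamma": 0.995,
    "num_epoch": 3,
}


def minibatches_per_epoch(cfg):
    """Hypothetical helper: minibatches in one pass over the buffer."""
    return cfg["buffer_size"] // cfg["batch_size"]


def effective_horizon(discount):
    """Rule-of-thumb number of steps over which future rewards still matter."""
    return 1.0 / (1.0 - discount)


# The buffer should divide evenly into minibatches.
buffer_is_multiple = config["buffer_size"] % config["batch_size"] == 0

# Assumed: one gradient step per minibatch, repeated for every epoch.
updates_per_buffer = minibatches_per_epoch(config) * config["num_epoch"]

reward_horizon = effective_horizon(config["gamma"])

print(buffer_is_multiple, updates_per_buffer, round(reward_horizon))
```

Under these assumptions the buffer splits into 10 equal minibatches, and a gamma of 0.995 means the agent cares about rewards roughly 200 steps ahead.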

Evolutionary programming training

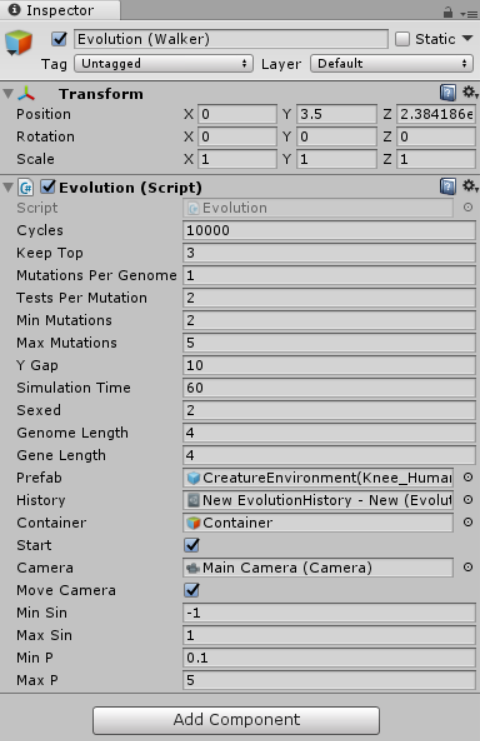

The whole training process is defined in Evolution script, where environment-wide parameters are specified. Below is the screenshot of parameters used to train the creature using EP:
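For readers unfamiliar with evolutionary programming, the general idea can be sketched in a few lines of Python. This is a generic, simplified illustration and not the Evolution script used in this project: the function names, the parameter values, the toy fitness function, and the "keep the fittest half" selection rule are all assumptions. In the real project, fitness would come from how far the creature travels in the simulation.

```python
import random


def evolve(fitness, genome_size, population_size=20, generations=50,
           mutation_scale=0.1, seed=0):
    """Mutation-only evolutionary loop (illustrative, not the project's script)."""
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(genome_size)]
                  for _ in range(population_size)]
    for _ in range(generations):
        # Each parent produces one child by Gaussian mutation (no crossover).
        children = [[gene + rng.gauss(0.0, mutation_scale) for gene in parent]
                    for parent in population]
        # Parents and children compete; the fittest half survives.
        pool = population + children
        pool.sort(key=fitness, reverse=True)
        population = pool[:population_size]
    return max(population, key=fitness)


def toy_fitness(genome):
    """Stand-in for 'distance walked': best possible score is 0 at all zeros."""
    return -sum(gene * gene for gene in genome)


best = evolve(toy_fitness, genome_size=4)
print(toy_fitness(best))
```

Because parents stay in the selection pool, the best fitness never gets worse from one generation to the next, which is one reason such a loop tends to settle into safe, conservative solutions.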

Results

ML-Agents

After training our agent for 1 hour 51 minutes, we can finally look at the results. I should also mention that training with ML-Agents can be sped up by up to 100 times (and it was), so you don’t have to wait months until you have something working.

I measured the speed of those creatures, and on average they reached about 271 units/min. As you can see, the creature is very stable and its movements are dynamic. When it is very close to falling, it stops and rearranges its limbs and body to prevent the fall, then continues walking.

Evolutionary programming

It took 50 minutes for evolutionary programming to reach its peak speed of 79 units/min. Training with EP was done in the editor itself, so unfortunately I couldn’t speed up the training process beyond 30 times without performance consequences. The speed-up was done by adding … But here’s the result:

You can clearly see the difference from the model trained by ML-Agents. The creature has adopted a much more stable and safe posture, with the body close to the ground, which prevents unstable behavior. The steps are also very short.

Experiment: no punishment for falling

Finally, I wanted to run an experiment in which falling wouldn’t be punished. Originally, falling restarted the simulation; here the agent could move in any way it liked. Trained with ML-Agents under these conditions, the agent reached an average speed of 145 units/min.

The evolutionary programming algorithm managed to make the agent move at 820 units/min, but it’s hard to call this “walking”.

In the end, here’s a table comparing all those models:

| Model | Speed [units/min] | Training time | Training speed-up |
| --- | --- | --- | --- |
| PPO (ML-Agents) | 271 | 1 h 51 min | ×100 |
| PPO (ML-Agents) (no punishment) | 145 | 5 h 39 min | ×100 |
| Evolutionary Programming | 79 | 50 min | ×30 |
| Evolutionary Programming (no punishment) | 820\* | 16 min | ×30 |

\* locomotion is unnatural
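The figures in the table can be put on a common footing with a bit of arithmetic. The Python sketch below converts each wall-clock training time into simulated time, assuming the listed speed-up factor applied for the whole run. Since the speed-up was described as "up to" that factor, these values are upper bounds rather than measurements. The variable and function names are illustrative.

```python
# (speed in units/min, wall-clock training minutes, speed-up factor),
# copied from the comparison table; 1 h 51 min = 111 min, 5 h 39 min = 339 min.
runs = {
    "PPO (ML-Agents)": (271, 111, 100),
    "PPO (ML-Agents) (no punishment)": (145, 339, 100),
    "Evolutionary Programming": (79, 50, 30),
    "Evolutionary Programming (no punishment)": (820, 16, 30),
}


def simulated_hours(wall_minutes, speed_up):
    """Upper bound on simulated time, assuming a constant speed-up."""
    return wall_minutes * speed_up / 60.0


sim_hours = {name: simulated_hours(minutes, factor)
             for name, (_, minutes, factor) in runs.items()}

# How much faster the PPO creature walks than the EP one (with punishment).
speed_ratio = runs["PPO (ML-Agents)"][0] / runs["Evolutionary Programming"][0]

for name, hours in sim_hours.items():
    print(f"{name}: up to {hours:.0f} simulated hours")
print(f"PPO / EP speed ratio: {speed_ratio:.2f}")
```

Under that assumption, the standard PPO run saw up to 185 simulated hours against 25 for EP, and produced a creature about 3.4 times faster.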

Summary

Looking at the results, ML-Agents’ walking model is clearly more natural and better looking. Although it takes longer to train, you get a much better and more stable result by using neural networks for such tasks.

In this project the creature was trained on flat terrain, which simplified the task for both algorithms. I’m convinced that evolutionary programming could not compete with reinforcement learning on more difficult tasks. The experiment was also done in a 2D environment; making it 3D would greatly complicate training for evolutionary walking, or make it impossible altogether.

ML-Agents is a great plugin that lets developers create AI at a level that was impossible before, or at least simplifies its development. It’s really great to see Unity catching up with such things and making such advanced tools easy to use.